5 Technical SEO Signals Behind AI Citations

Why technical SEO now shapes the conditions for AI systems to retrieve, understand, and cite your content

Technical SEO used to be discussed mostly in relation to rankings.

- Can search engines crawl your pages?

- Is the site fast enough?

- Are your canonicals clean?

Those questions still matter, but they are no longer the full story.

As AI search grows, technical SEO is becoming part of a different kind of visibility problem. It is not just about where a page ranks in a search engine. It is about whether that page is easy for AI systems to retrieve, interpret, trust, and use as a source.

That is why Semrush’s study on the technical SEO factors behind AI search is so useful. Based on analysis of 5 million cited URLs, it points to a clearer picture of what strong AI-cited pages tend to have in common.

The most important point is this: technical SEO does not guarantee AI citations. But it increasingly creates the conditions that make citations possible.

This article looks at five technical SEO signals that tend to show up on AI-cited pages.

What AI citations actually mean

An AI citation happens when an AI search platform or answer engine uses a page as a source for its response. That can happen in systems like ChatGPT Search, Google AI Mode, Perplexity, and similar tools that synthesize information instead of only listing links.

Being cited is different from simply being indexed. A page can exist on the web and still be overlooked if it is hard to parse, weakly structured, technically inaccessible, or difficult to trust.

The five technical SEO signals at a glance

| Signal | Why it matters |
| --- | --- |
| Structured data | Schema helps AI systems understand entities, page type, relationships, and context. |
| URL clarity | Descriptive, concise URL slugs make content easier to interpret and retrieve. |
| User engagement | Strong interaction signals often reflect the quality and usability of cited pages. |
| Crawlability | Pages must be technically accessible and easy for crawlers to process. |
| Content structure | Clean formatting, summaries, sections, and Q&A patterns make content easier to surface. |

Why technical SEO matters more in AI search

One of the biggest mistakes people make is assuming AI visibility works like a simple extension of Google rankings. It does not. Traditional search engines rank pages and let users decide which ones to click. AI systems often do more. They retrieve, compare, summarize, and cite.

That means technical SEO starts doing a slightly different job. It is no longer only helping bots discover content. It is helping machines understand what the content is, how it is structured, who published it, and whether it looks dependable enough to use in an answer.

That is why technical SEO now feels closer to infrastructure than optimization. It is the layer that helps AI systems make sense of a page before they ever decide whether to reference it.

1. Structured data helps AI systems understand context

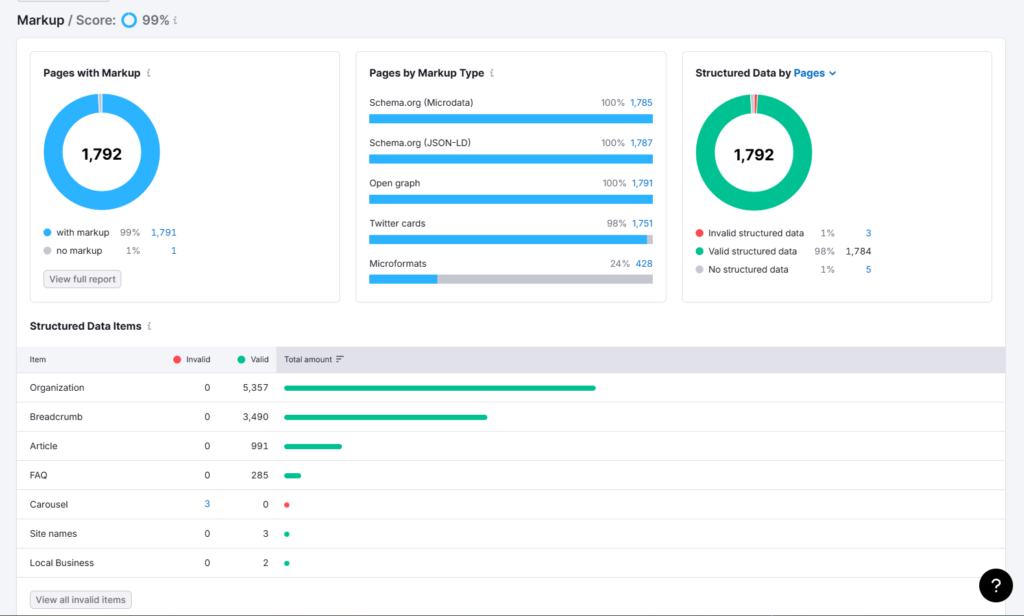

Structured data is one of the clearest signals in the Semrush study. Organization, Article, and Breadcrumb schema appeared most frequently across cited pages, with higher implementation rates on pages cited by Google AI Mode.

That does not prove schema directly causes citations. But it strongly suggests that structured data supports interpretation. It tells machines what kind of page they are looking at, how that page fits into a site, and what entities are involved.

This matters because AI systems do not only need raw text. They need context. If your site clearly identifies its organization, article type, breadcrumb hierarchy, product information, FAQ relationships, and author context, you reduce ambiguity.

For most teams, the right move is not to chase every available schema type but to implement the most relevant ones properly and consistently. Depending on the site, Organization, Article, BreadcrumbList, FAQPage, Product, and LocalBusiness markup are often the strongest starting points.
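To make this concrete, here is a minimal Python sketch that builds a single JSON-LD block combining Organization, Article, and BreadcrumbList using schema.org vocabulary. The site URL, organization name, author, date, and the helper names `build_article_graph` and `to_script_tag` are hypothetical placeholders, not part of the Semrush study; validate real markup with a tool such as the Schema Markup Validator or Google's Rich Results Test.

```python
import json

# Hypothetical site details; replace with your own organization and URLs.
SITE_URL = "https://www.example.com"
ORG_NAME = "Example Co"


def build_article_graph(headline: str, slug: str, author: str, date_published: str,
                        crumbs: list[tuple[str, str]]) -> dict:
    """Return a JSON-LD @graph combining Organization, Article, and BreadcrumbList."""
    page_url = f"{SITE_URL}/{slug}"
    organization = {
        "@type": "Organization",
        "@id": f"{SITE_URL}/#organization",
        "name": ORG_NAME,
        "url": SITE_URL,
    }
    article = {
        "@type": "Article",
        "headline": headline,
        "url": page_url,
        "datePublished": date_published,
        "author": {"@type": "Person", "name": author},
        # Point at the Organization node by @id instead of repeating its fields.
        "publisher": {"@id": organization["@id"]},
    }
    breadcrumbs = {
        "@type": "BreadcrumbList",
        # ListItem positions are 1-based, in the order the crumbs are given.
        "itemListElement": [
            {"@type": "ListItem", "position": i, "name": name, "item": url}
            for i, (name, url) in enumerate(crumbs, start=1)
        ],
    }
    return {"@context": "https://schema.org", "@graph": [organization, article, breadcrumbs]}


def to_script_tag(data: dict) -> str:
    """Serialize the graph into the <script> element that goes in the page <head>."""
    return '<script type="application/ld+json">\n' + json.dumps(data, indent=2) + "\n</script>"


if __name__ == "__main__":
    graph = build_article_graph(
        headline="5 Technical SEO Signals Behind AI Citations",
        slug="blog/technical-seo-ai-citations",
        author="Jane Doe",            # hypothetical author
        date_published="2025-01-15",  # hypothetical date
        crumbs=[("Home", SITE_URL), ("Blog", f"{SITE_URL}/blog")],
    )
    print(to_script_tag(graph))
```

Linking the Article's `publisher` to the Organization node through `@id` defines the organization once per page, which is one way to keep markup consistent across templates.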

2. URL clarity supports retrieval and interpretation

The Semrush study found that cited URLs most often used concise but descriptive slugs, especially in the 17 to 40 character range. The best-performing cluster was even tighter in some parts of the sample.

This does not mean there is a magical slug length formula. It means clarity matters. Pages with bloated, vague, parameter-heavy, or deeply nested URLs are harder to interpret at a glance and may reflect weaker overall site hygiene.

A good URL should describe the topic without stuffing, confusion, or unnecessary length. It should help both humans and machines understand what the page is about before the page is even opened.
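A basic slug audit can be scripted. The Python sketch below generates a hyphenated slug from a title and flags query parameters, deep nesting, and slugs outside the 17 to 40 character band the study observed. That band is borrowed from the study as a heuristic only; the three-segment depth limit, the capitals/underscores check, and the names `slugify` and `audit_url` are assumptions for illustration.

```python
import re
from urllib.parse import urlsplit

# Heuristic band borrowed from the study's 17-40 character finding; not a rule.
MIN_SLUG_LEN, MAX_SLUG_LEN = 17, 40
# Hypothetical limit on path depth; tune it for your own site architecture.
MAX_DEPTH = 3


def slugify(title: str) -> str:
    """Lowercase a title and join its ASCII letter/digit runs with hyphens."""
    return "-".join(re.findall(r"[a-z0-9]+", title.lower()))


def audit_url(url: str) -> list[str]:
    """Return warnings about query parameters, nesting, and slug length or format."""
    parts = urlsplit(url)
    segments = [s for s in parts.path.split("/") if s]
    slug = segments[-1] if segments else ""
    warnings = []
    if parts.query:
        warnings.append("has query parameters")
    if len(segments) > MAX_DEPTH:
        warnings.append(f"nested {len(segments)} levels deep")
    if not MIN_SLUG_LEN <= len(slug) <= MAX_SLUG_LEN:
        warnings.append(f"slug length {len(slug)} outside {MIN_SLUG_LEN}-{MAX_SLUG_LEN}")
    if re.search(r"[A-Z_]", slug):
        warnings.append("slug contains capitals or underscores")
    return warnings


if __name__ == "__main__":
    for url in [
        "https://www.example.com/blog/technical-seo-ai-citations",
        "https://www.example.com/p?id=123",
    ]:
        print(url, audit_url(url))
```

With the two sample URLs, the first passes cleanly and the second is flagged for its query string and its one-character slug.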

That is one reason URL structure remains underrated. It is not a glamorous topic, but it supports the larger pattern AI systems seem to prefer: content that is easier to classify, compare, and trust.

3. User engagement reflects the quality of pages that get cited

The engagement pattern in the Semrush study is especially important because it is easy to misread. Cited pages tended to show longer visit durations, lower bounce rates, more pages per visit, and higher conversion rates.

That does not mean AI systems are reading your bounce rate directly and deciding to cite you. What it likely means is that the kinds of pages people engage with deeply often share the same qualities that make them more useful to AI systems: clarity, trust, good structure, strong intent match, and better user experience.

In other words, engagement is probably not the direct cause. It is a signal of quality. Pages that work well for people often also work better as sources.

This should shift how teams think about technical SEO. Speed, mobile usability, navigation, hierarchy, and readability are not just UX improvements. They shape the conditions that make stronger engagement possible, and stronger engagement tends to cluster around the pages AI systems cite.
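If you want to see whether this pattern holds on your own site, you can compute the same metrics per landing page and compare pages that AI platforms cite with pages they do not. The Python sketch below assumes a hypothetical export of session records; the `Session` fields and `engagement_summary` name are illustrative. It uses the classic definition of bounce rate (single-page sessions divided by all sessions), which differs from GA4, where bounce rate is the share of sessions that were not engaged. Any gap you find is still a correlation, not proof of cause.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Session:
    """One visit, as exported from an analytics tool (hypothetical schema)."""
    landing_page: str
    pages_viewed: int
    duration_seconds: float
    converted: bool


def engagement_summary(sessions: list[Session]) -> dict[str, dict[str, float]]:
    """Group sessions by landing page and compute classic engagement metrics."""
    by_page: dict[str, list[Session]] = {}
    for s in sessions:
        by_page.setdefault(s.landing_page, []).append(s)

    summary = {}
    for page, group in by_page.items():
        n = len(group)
        summary[page] = {
            "sessions": n,
            # Classic definition: share of sessions that viewed only one page.
            "bounce_rate": sum(s.pages_viewed == 1 for s in group) / n,
            "pages_per_visit": mean(s.pages_viewed for s in group),
            "avg_duration_s": mean(s.duration_seconds for s in group),
            "conversion_rate": sum(s.converted for s in group) / n,
        }
    return summary
```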

4. Crawlability is a hard requirement, not a nice-to-have

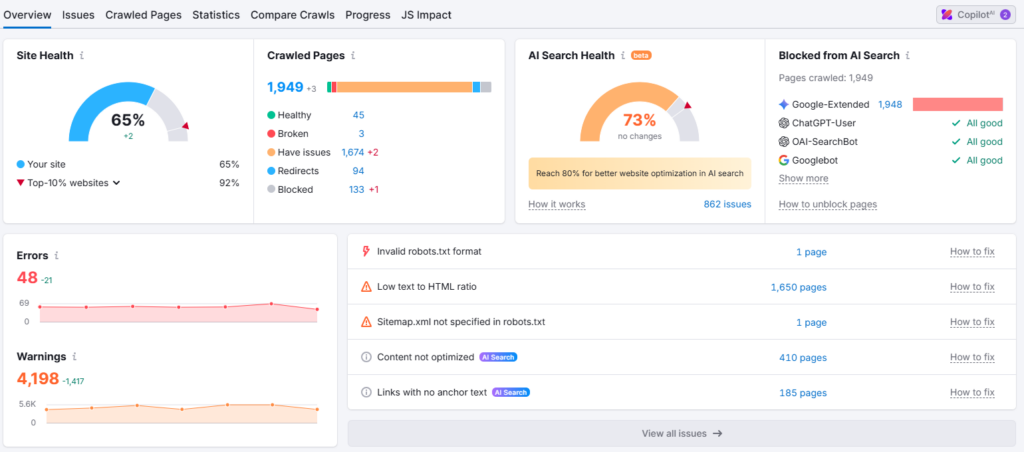

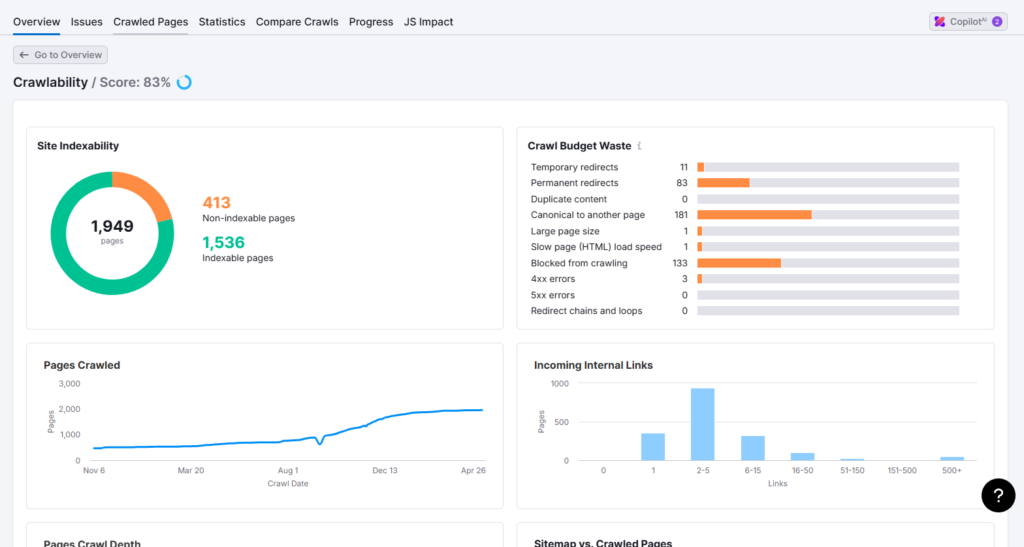

If a page is hard to crawl or parse, it is less likely to become part of an AI answer. This sounds obvious, but it matters more now because the AI search ecosystem includes more crawlers and more retrieval pathways than many teams are tracking.
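A simple first check is whether your robots.txt lets these crawlers in at all. The Python sketch below uses the standard library's `urllib.robotparser` to test a robots.txt body against several crawler user-agent tokens. The token list is an assumption based on names vendors have published and may change, so confirm it against each vendor's current documentation; `crawler_access` is a hypothetical helper name.

```python
from urllib.robotparser import RobotFileParser

# Assumed user-agent tokens; verify current names in each vendor's documentation.
AI_USER_AGENTS = ["GPTBot", "OAI-SearchBot", "PerplexityBot", "ClaudeBot", "Googlebot"]


def crawler_access(robots_txt: str, url: str, agents=AI_USER_AGENTS) -> dict[str, bool]:
    """Report which user agents the given robots.txt body allows to fetch `url`."""
    parser = RobotFileParser()
    # parse() takes the file's lines directly, so no network request is made here.
    parser.parse(robots_txt.splitlines())
    return {agent: parser.can_fetch(agent, url) for agent in agents}


if __name__ == "__main__":
    robots = "User-agent: GPTBot\nDisallow: /\n\nUser-agent: *\nDisallow: /admin/\n"
    print(crawler_access(robots, "https://www.example.com/blog/post"))
```

To test a live site, call `set_url()` with your robots.txt URL and then `read()` instead of `parse()`. Keep in mind that this parser implements the basic protocol with simple prefix matching on paths, so it may not mirror every extension a given crawler supports, and robots.txt only governs crawlers that choose to honor it.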

Semrush’s study highlights a broader shift: technical accessibility is becoming part of AI readiness. Clean HTML, stable page rendering, sensible hierarchy, and server-side rendering where needed all help make content easier for crawlers to process.

JavaScript-heavy sites are especially vulnerable here. If core content depends on rendering pathways that AI crawlers struggle with, that can quietly weaken visibility even when the page looks fine to users in a browser.
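One rough way to test for this is to compare what your server sends before any JavaScript runs with the phrases that matter most on the page. The Python sketch below parses raw HTML (for example, the response body you get from `curl` or `urllib.request`) with the standard library's `html.parser`, ignores `<script>` and `<style>` contents, and reports which key phrases are missing. It deliberately does not execute JavaScript, so it approximates a crawler that does not render; real crawlers vary, and `ServerTextExtractor` and `missing_from_raw_html` are hypothetical names.

```python
from html.parser import HTMLParser


class ServerTextExtractor(HTMLParser):
    """Collect text present in raw HTML, ignoring <script> and <style> contents."""

    SKIP = {"script", "style"}

    def __init__(self) -> None:
        super().__init__()
        self._skip_depth = 0
        self.chunks: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.SKIP:
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in self.SKIP and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        # Keep only non-empty text that sits outside script/style elements.
        if not self._skip_depth and data.strip():
            self.chunks.append(data.strip())


def missing_from_raw_html(raw_html: str, key_phrases: list[str]) -> list[str]:
    """Return the key phrases that do not appear in the unrendered HTML text."""
    extractor = ServerTextExtractor()
    extractor.feed(raw_html)
    extractor.close()
    text = " ".join(extractor.chunks).lower()
    return [p for p in key_phrases if p.lower() not in text]
```

A server-rendered page that contains the phrase in its markup returns an empty list, while a client-rendered shell that only injects the phrase through a script returns the phrase as missing.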

This is why crawlability should be treated as foundational. Structured data, content quality, and page authority matter far less if the page cannot be accessed or interpreted reliably in the first place.

5. Content structure makes pages easier to surface and cite

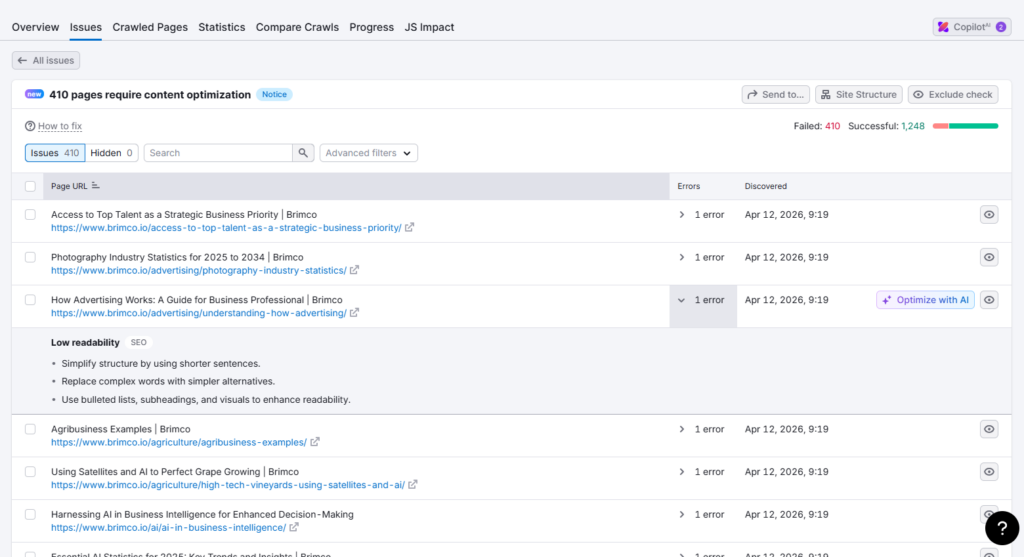

The final signal is one that many teams overlook because it sits between technical SEO and editorial execution. Content structure matters. AI systems tend to work better with pages that are clearly organized, broken into logical sections, and easy to summarize.

That is why Q&A formatting, concise summaries, descriptive subheadings, and direct answers often perform well in AI-driven environments. They make it easier for systems to extract meaning from the page without guessing too much about what the author intended.

This does not mean every article should become a flat FAQ page. It means the structure of a page should reduce friction. Important ideas should be easy to find. Definitions should be explicit. Sections should be coherent. Dense walls of text create more interpretation work for both readers and machines.
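Part of this can be checked mechanically. The Python sketch below extracts the h1 to h6 outline from a page and flags three common structural problems: a page without exactly one h1, skipped levels (such as an h2 followed directly by an h4), and empty headings. These rules are widely used editorial and accessibility conventions rather than requirements published by any AI platform, and `OutlineParser` and `outline_issues` are hypothetical names.

```python
from html.parser import HTMLParser
from typing import Optional

HEADING_LEVELS = {"h1": 1, "h2": 2, "h3": 3, "h4": 4, "h5": 5, "h6": 6}


class OutlineParser(HTMLParser):
    """Record (level, text) for every h1-h6 element in document order."""

    def __init__(self) -> None:
        super().__init__()
        self.outline: list[tuple[int, str]] = []
        self._current: Optional[int] = None
        self._buffer: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in HEADING_LEVELS:
            self._current = HEADING_LEVELS[tag]
            self._buffer = []

    def handle_data(self, data):
        # Text inside nested inline tags (e.g. <a> in a heading) is captured too.
        if self._current is not None:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if tag in HEADING_LEVELS and self._current is not None:
            self.outline.append((self._current, "".join(self._buffer).strip()))
            self._current = None


def outline_issues(html: str) -> list[str]:
    """Return human-readable problems with the page's heading outline."""
    parser = OutlineParser()
    parser.feed(html)
    parser.close()
    issues = []
    h1_count = sum(1 for level, _ in parser.outline if level == 1)
    if h1_count != 1:
        issues.append(f"expected one h1, found {h1_count}")
    # Compare each heading with the one before it to catch skipped levels.
    for (prev, _), (level, text) in zip(parser.outline, parser.outline[1:]):
        if level > prev + 1:
            issues.append(f"skipped level before h{level} '{text}'")
    for level, text in parser.outline:
        if not text:
            issues.append(f"empty h{level}")
    return issues
```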

In practical terms, strong content structure helps AI systems surface the right section of your page, not just the page itself.

What these five signals have in common

The five signals in the Semrush study share a deeper pattern: they all reduce ambiguity. Structured data clarifies context. URLs clarify meaning. Engagement reflects usefulness. Crawlability ensures access. Content structure supports interpretation.

That is why technical SEO still matters in AI search, though not always in the way people expect. The point is not to chase one isolated ranking factor. The point is to build pages that are easier for systems to retrieve, interpret, and trust.

That is a much more durable way to think about AI visibility than trying to guess a hidden algorithm.

How to apply this in practice

If you want to act on these findings, start with the foundation. Audit whether important pages are crawlable, server-rendered where necessary, properly structured, and technically stable.

Then tighten the clarity layer. Improve schema on priority templates, rewrite vague slugs, clean up page hierarchy, and add stronger summaries and subheadings where content is currently difficult to parse.

After that, look at the user experience layer. Improve speed, mobile usability, internal linking, and page flow so your strongest pages are not only technically accessible but genuinely satisfying to use.

The teams that benefit most from AI search will usually not be the ones chasing one trick. They will be the ones improving how understandable and usable their pages are as a whole.

Final takeaway

Technical SEO still matters, but the reason it matters is expanding. It no longer supports only rankings. It increasingly supports citation readiness.

That is the real lesson behind the Semrush study. AI systems cite pages that are easier to understand, easier to retrieve, and easier to trust. Technical SEO helps create those conditions.

The practical goal is not perfection. It is clarity. And clarity is becoming one of the most important advantages a website can have in the AI search era.